What kind of software will AIs run? The question matters because the answer tells us how far the current flowering of parallel hardware will actually get us toward human-equivalent processing power. Amdahl’s Law applies: if the task of being intelligent is strongly serial, all those processors won’t help much. If it’s parallelizable, they will, and that means the hardware for AI is basically here.
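To put rough numbers on that, here is a minimal sketch of Amdahl’s Law in Python. The function name `amdahl_speedup` and the sample fractions are my own illustrative assumptions, not measurements of any real AI workload. The formula itself is the standard one: speedup = 1 / ((1 - p) + p / N), where p is the parallelizable fraction of the work and N is the number of processors.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Ideal speedup predicted by Amdahl's Law.

    parallel_fraction: share of the work that can run in parallel (0.0 to 1.0).
    processors: number of processors applied to the parallel share.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


if __name__ == "__main__":
    # Hypothetical fractions, chosen only to show how the curve behaves.
    for fraction in (0.50, 0.95, 0.999):
        for count in (8, 512, 65536):
            speedup = amdahl_speedup(fraction, count)
            print(f"p={fraction:<5} N={count:<6} speedup={speedup:.1f}")
```

The thing to notice is the ceiling: no matter how many processors you add, the speedup can never exceed 1 / (1 - p). A task that is half serial tops out at 2x, while one that is 99.9% parallel can reach nearly 1000x.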

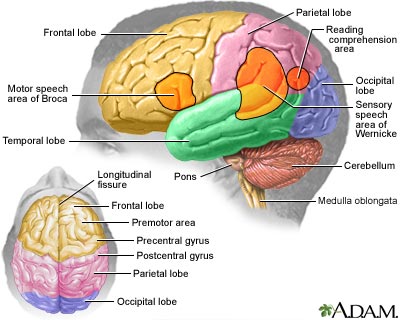

One good reason to think that parallelism will work is that the brain is enormously parallel. What seems to be going on in there is vastly parallel pattern-matching. Indeed, people working on neural-like forms of AI, such as Edelman et al. at The Neurosciences Institute, are specifically looking for programmers to work on GPUs.

What if we’re taking a more conventional approach to AI? One thing to remember is that visual processing is a significant chunk of what our brains do, and if you combine that with visualization and geometric thinking, you get a large amount of the kind of computing that GPUs were specifically designed to do. Other parts of AI are amenable to parallelization as well. One of the most fundamental techniques is search, in forms ranging from the tree searches of chess programs to the populations of genetic algorithms; in both, many candidate positions or individuals can be evaluated independently of one another. Modern AI also uses a lot of statistics and similar numerical techniques borrowed directly from scientific computing, which is where a lot of the GPGPU activity came from in the first place.
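As an illustration of why population-based search parallelizes so easily, here is a small Python sketch that scores every individual in a genetic-algorithm population concurrently. Everything in it is a toy assumption of mine: the names `one_max`, `evaluate_population`, and `fittest`, the bit-list genome, and the "count the 1 bits" fitness function. It uses a thread pool only to keep the sketch portable; for CPU-bound fitness functions in CPython you would want a process pool or a GPU to see real speedup.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence

Genome = List[int]  # hypothetical representation: a list of 0/1 genes


def one_max(genome: Genome) -> int:
    """Toy fitness function (an assumption for illustration): count the 1 bits."""
    return sum(genome)


def evaluate_population(
    population: Sequence[Genome],
    fitness: Callable[[Genome], int] = one_max,
    workers: int = 4,
) -> List[int]:
    """Score every individual independently; results keep population order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each fitness call depends only on its own genome, so the calls
        # can be handed to different workers without any coordination.
        return list(pool.map(fitness, population))


def fittest(
    population: Sequence[Genome],
    fitness: Callable[[Genome], int] = one_max,
    workers: int = 4,
) -> Genome:
    """Return the highest-scoring individual (the first one, on ties)."""
    scores = evaluate_population(population, fitness, workers)
    best_index = max(range(len(population)), key=scores.__getitem__)
    return population[best_index]
```

The evaluation step has no shared state, which is exactly the situation where Amdahl’s Law is kindest: the serial part shrinks to selection and bookkeeping, and the expensive part scales with the number of workers.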

So I have to say that in the end, I agree with Minsky — not in detail, as far as specific estimates of MIPS are concerned, but in the broad spirit of the notion that the hardware is here: let’s get to work on the software.