There was a gratifyingly large response to last Friday’s post Acolytes of neo-Malthusian Apocalypticism.

Several of the commenters seemed to think I was trying to refute the LtG model, but that would require a whole book instead of one blog post. I consider LtG to have been demolished in detail by people with a lot more expertise in economic modelling than I, more than three decades ago. My point was simply that Turner’s paper didn’t come close to showing what some people were claiming it did, and in particular did nothing to resuscitate LtG.

One commenter asks:

What’s the cheap replacement for cheap oil? Don’t you realize that spikes in oil prices have preceded the last 4 recessions?

Hmm. If this has happened 4 times, which one was because we ran out of oil?

Here are inflation-adjusted oil prices over the period:

What struck me about the graph was that oil price shocks seem to correlate with gloom-and-doom fads. But most of the volatility is politically inflicted. After a spike to $150 last year it’s been hovering around $50 since. This is about twice the median of the previous 40 years, but the increase is due more to increased demand from Asian development than depletion.

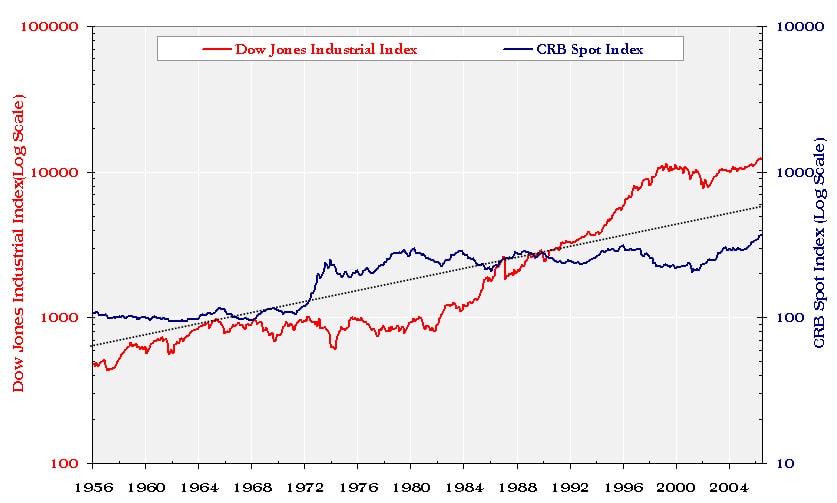

On the other hand, it is quite true that commodities in general are counter-cyclical to general market indicators, as shown here:

I would conjecture that the sharp run-up in commodities in the early 70s was one reason people were so willing to listen to LtG, but then they went flat for 30 years and you got things like the Simon-Ehrlich wager.

All of [Ehrlich’s] grim predictions had been decisively overturned by events. Ehrlich was wrong about higher natural resource prices, about “famines of unbelievable proportions” occurring by 1975, about “hundreds of millions of people starving to death” in the 1970s and ’80s, about the world “entering a genuine age of scarcity.” In 1990, for his having promoted “greater public understanding of environmental problems,” Ehrlich received a MacArthur Foundation Genius Award.” [Simon] always found it somewhat peculiar that neither the Science piece nor his public wager with Ehrlich nor anything else that he did, said, or wrote seemed to make much of a dent on the world at large. For some reason he could never comprehend, people were inclined to believe the very worst about anything and everything; they were immune to contrary evidence just as if they’d been medically vaccinated against the force of fact. Furthermore, there seemed to be a bizarre reverse-Cassandra effect operating in the universe: whereas the mythical Cassandra spoke the awful truth and was not believed, these days “experts” spoke awful falsehoods, and they were believed. Repeatedly being wrong actually seemed to be an advantage, conferring some sort of puzzling magic glow upon the speaker.

What’s the replacement for cheap oil? In the short run, efficiency and conservation (replace commuting with telecommuting) are the most important substitution effects. If higher oil prices are sustained, we’ll see major new forms of exploration and recovery, as in this post I linked to before:

More recently, companies such as Royal Dutch Shell have developed ways to tap the oil in situ, by drilling boreholes that are thousands of feet deep and feeding into them inch-thick cables that are heated using electrical resistance and that literally cook the surrounding rock. The kerogen liquefies and gradually pools around an extraction well, where the oil-like fluid can easily be pumped to the surface.

The process involves no mining, uses less water than other approaches, and doesn’t leave behind man-made mountains of kerogen-sapped shale. And according to a Rand Corporation study, it can also be done at a third of the cost of mining and surface processing.

An affordable way to extract oil from oil shale would make the United States into one of the best places to be when conventional worldwide oil production starts its final decline. The US has an amount of oil in shale equal to about 25 years of oil supply at the world’s current oil consumption rate.

Also, notice we aren’t drilling for oil in the deep oceans at all, but improved technology (and higher prices) would make this possible at some point. That is, we aren’t even looking at over half the planet yet. The US is 2% of the Earth’s surface (10 out of 510 million sq km). Assuming an even distribution, that means total shale oil on Earth is over 1200 years’ supply at current rates. In any practical sense, the amount of oil on Earth is a function of the technology you have to extract it.
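For anyone who wants to check the back-of-envelope arithmetic, here is a minimal sketch. It uses only the figures quoted above (25 years of supply in US shale, 10 of 510 million sq km) and the same even-distribution assumption, which is a deliberate simplification and not a geological estimate. The names are my own and purely illustrative.

```python
# Back-of-envelope scaling of US shale oil to the whole planet.
# All inputs are the round figures quoted in the post; the even
# distribution of shale over the Earth's surface is an assumption.

US_AREA_MKM2 = 10.0       # US surface area, million sq km (rounded)
EARTH_AREA_MKM2 = 510.0   # Earth's total surface area, million sq km
US_SHALE_YEARS = 25.0     # US shale oil, in years of current world consumption


def global_shale_years(us_years, us_area, earth_area):
    """Scale the US figure by area, assuming shale is spread evenly."""
    return us_years * earth_area / us_area


us_share = US_AREA_MKM2 / EARTH_AREA_MKM2
total_years = global_shale_years(US_SHALE_YEARS, US_AREA_MKM2, EARTH_AREA_MKM2)

print(f"US share of Earth's surface: {us_share:.1%}")
print(f"Implied global shale supply: {total_years:.0f} years at current rates")
```

The point is not the exact number, which depends entirely on the even-distribution assumption, but the order of magnitude.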

In the longer run, we have plenty of uranium and thorium (see below), and practically limitless space-based solar power is within our technological capability to harvest if we only try.

Many commenters seemed to take the “finite resources” assumptions of LtG pretty much for granted, though. One goes so far as to point out:

A simple calculation (first done publicly by Isaac Asimov, as far as I know) shows you that if population grows just one percent per year without constraint, all the matter in the known universe will be in human bodies before 11,000 AD. So there is a limit to the resources available to us.

But this is a very simplistic notion of what LtG was actually trying to say. It wasn’t “the universe is finite so we can’t grow exponentially forever.” It was “there will be a major collapse of modern civilization in the 21st century due to resource depletion.”

It’s also a pretty puzzling exegesis of what I was trying to say, which wasn’t “the human race should continue growing exponentially forever” but “there won’t be a major collapse of modern civilization in the 21st century due to resource depletion.”

This isn’t to say that resources won’t be depleted; they will. But the way they get depleted is much more complex than the simple LtG model where we voraciously use them up at an increasing rate and suddenly run out and starve to death. In reality, resources tend to have a graduated difficulty of extraction leading to a more-or-less evenly rising supply curve. This means that the price will rise over time, encouraging efficiency, additional exploration, and hoarding among those who see that the stuff will be more valuable in the future. This leads to growth curves that more closely resemble the standard diminishing-returns sigmoid than LtG’s collapse model.
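To make the shape of that curve concrete, here is a minimal sketch of a textbook logistic (sigmoid) function. It is not the LtG model or anyone's actual forecast; the ceiling, rate, and midpoint are hypothetical values chosen only to show the shape.

```python
import math


def logistic(t, ceiling=1.0, rate=0.1, midpoint=50.0):
    """Textbook logistic curve: slow start, rapid middle, levelling off.

    All three parameters are illustrative assumptions, not fitted values.
    """
    return ceiling / (1.0 + math.exp(-rate * (t - midpoint)))


years = range(0, 101, 10)
curve = [logistic(t) for t in years]

for t, level in zip(years, curve):
    print(f"t={t:3d}  level={level:.3f}")
```

The curve keeps rising but by ever smaller increments as it approaches the ceiling. It never overshoots and crashes, which is the qualitative difference from a collapse model.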

Furthermore, projections by real experts, such as population trends by mainstream demographers (which are in fact just such a sigmoid), take into account many more factors than LtG’s simplistic model. Note, for example, that for the majority of the world’s population that’s above subsistence level, declining resources do not mean declining birth rates: it’s just the opposite; the richer, the fewer children.

Nor is this to say that collapses won’t happen. But when they have, they have usually been due to government imposition of pernicious interference that broke the normal market responses. This doesn’t necessarily mean one’s own government, of course, especially in the case of oil.

And finally, objecting to my assertion that government regulation had stifled innovation in the nuclear industry, one commenter writes:

“Compare today’s computers with 1970s ones and see how different the reactors might be today if the same kind of development had taken place.”

Yeah, right. This is the old “if cars had developed like computers, they would go 1000 mph and get 2,000 miles per gallon” argument. To which the response is “yes – and they would seat 100 people and be the size of a matchbox.”

The analogy is misleading, at best. It would be better to compare nuclear reactors to passenger jets.

I don’t understand why cars and jets are supposed to be a better example — cars and jets are highly regulated, and computers are not. Furthermore, the performance of jets improved up through about 1970 at a nice exponential rate, and levelled off with the 747 because it hit a sweet spot in the price/performance landscape (and because cheap energy peaked about the same time). The sweet spot is just below Mach 1, above which the cost per passenger-mile triples.

Computers improved so rapidly in price/performance terms because the physics doesn’t present the kind of “glass ceiling” that supersonic flight does for airplanes. So the key to analyzing the question of how well reactors could have improved over the period is to see just what kind of headroom they had in the design space. An overview of the possibilities can be seen here. In particular, integral fast reactors could achieve a 99% fuel burn-up, improving both fuel efficiency and waste production by a couple of orders of magnitude over the 1970s designs we’re still using:

The Integral Fast Reactor or Advanced Liquid-Metal Reactor is a design for a nuclear fast reactor with a specialized nuclear fuel cycle. A prototype of the reactor was built in the United States, but the project was canceled by the U.S. government in 1994, three years before completion.

In other words, the physics of fission has at least a factor of 1000 free upside in power/waste and is capable of using fuels that are 100-1000 times as abundant as current practice. Instead we get this:

We had a confluence of bad design decisions at TMI, some of them made by the U.S. Congress. U.S. law specifically prohibited using computers to directly control nuclear power plants. …

Now nuclear energy can be mighty dangerous and is not something to be messed with lightly, but another irony in this story is that nuclear power is actually pretty simple compared to many other industrial processes. The average chemical plant or oil refinery is vastly more complex than a nuclear power plant. The nuke plant heats water to run a steam turbine while a chemical plant can make thousands of complex products out of dozens of feedstocks. Their process control was totally automated 30 years ago and had an amazing level [of] safety and interlock systems. A lot of effort was put into the management of chemical plant startup, shutdown, and maintenance. The chemical plant control system was designed to force the highest safety. So when manufacturing engineers from chemical plants looked at TMI, they were shocked to see the low-tech manner in which the reactors were controlled and monitored. To the chemical engineers it looked like an accident waiting to happen.

… And for the next 29 years we didn’t build another nuclear power plant, leaving that mainly to the French and the Japanese.

Yes, progress does hobble to a halt on occasion. But it’s not because we’ve run out of territory. It’s because we’ve shot ourselves in the foot.