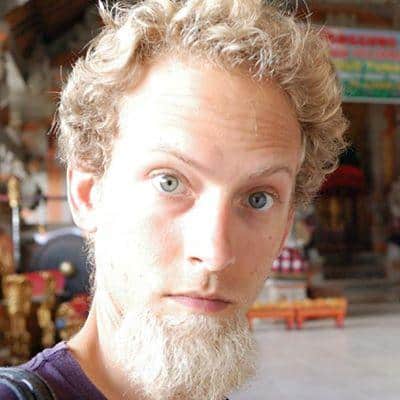

Presenter

Keenan Pepper, Salesforce

Graduate student in the physics department at the University of California, Berkeley. Lead Software Engineer at Salesforce.

Summary:

Keenan Pepper proposes a research direction for addressing safety and alignment concerns in AI, focusing on embedded agents. Embedded agents exist within their environment rather than apart from it, so the environment can affect their cognition and expose them to manipulation. Studying environments that contain embedded, intelligent agents is valuable both for understanding the potential dangers and for exploring interpretability. Keenan highlights the need for a safe sandbox in which to study embedded agents before superintelligent systems are themselves embedded. Interpretability is crucial, especially in games where success depends on revealing internal states to opponents. Finally, Keenan suggests creating an environment, possibly with a game-like setup, in which agents can be trained to perform embedded tasks while revealing their internal states.