Presenter:

Steven Byrnes, Astera Institute

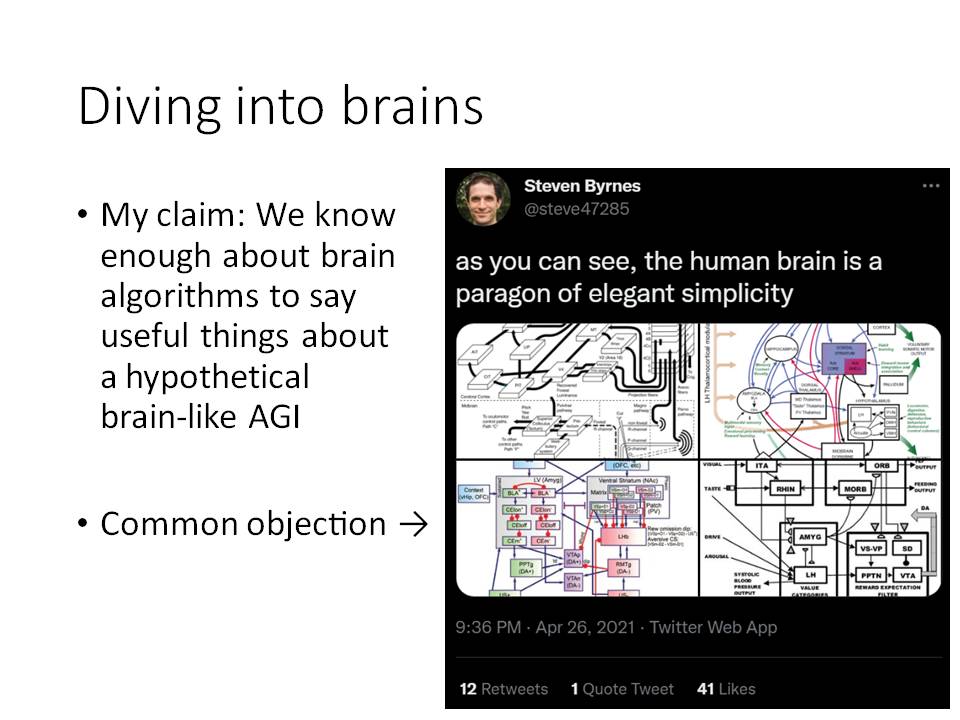

Steven Byrnes is a Research Fellow at the Astera Institute, where he does research at the intersection of neuroscience and Artificial General Intelligence (AGI) Safety. His public writing on this topic is listed here. Before working on AGI Safety, he was a physicist in academia and industry. He has a Physics PhD from UC Berkeley...

Summary:

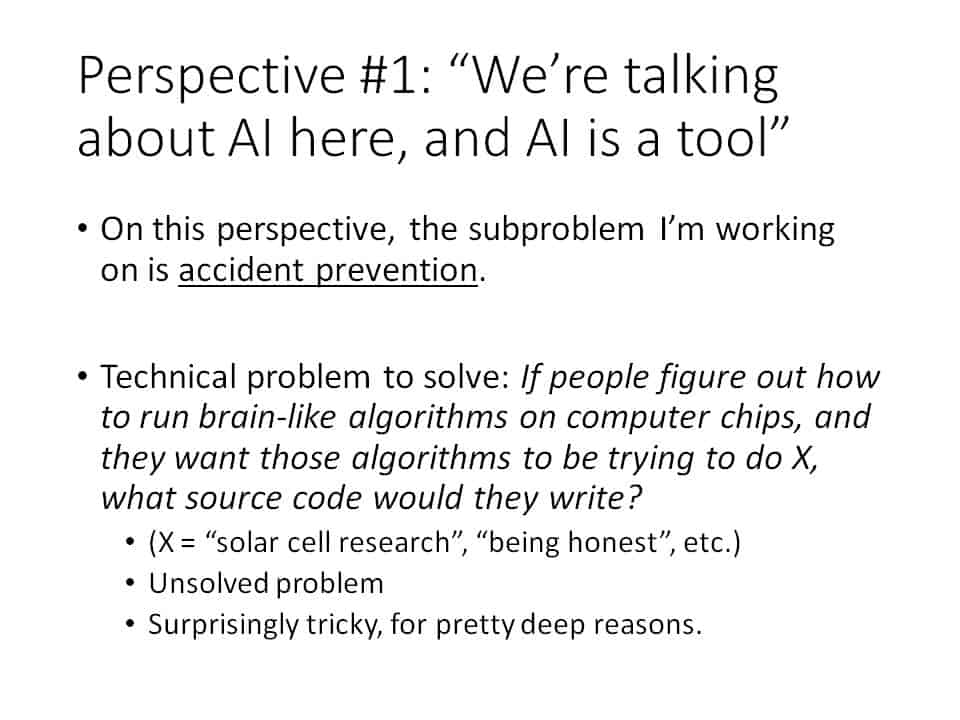

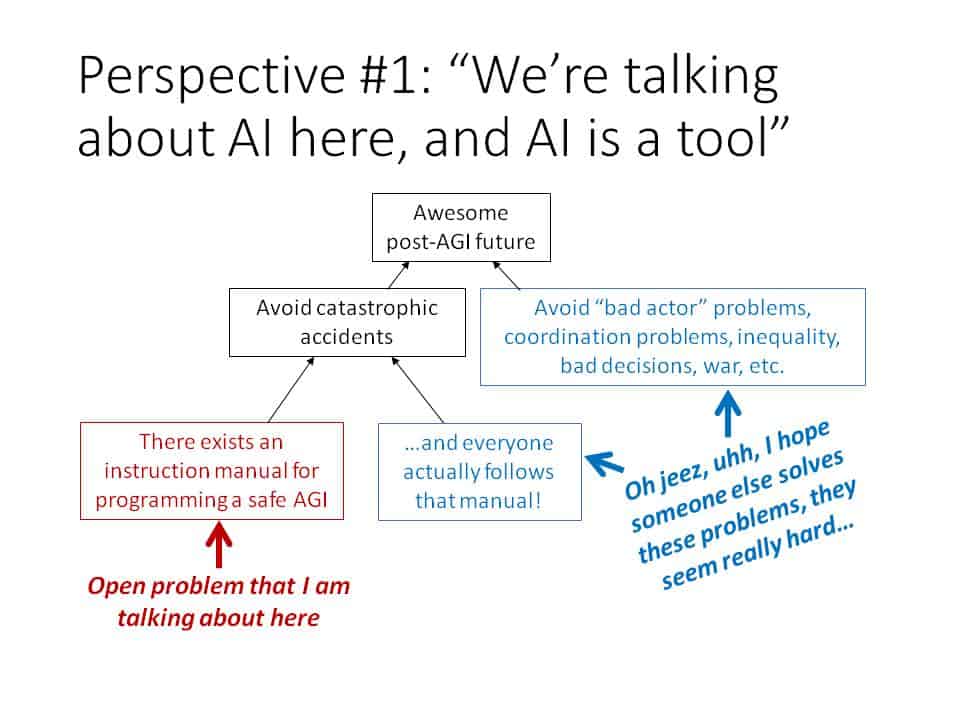

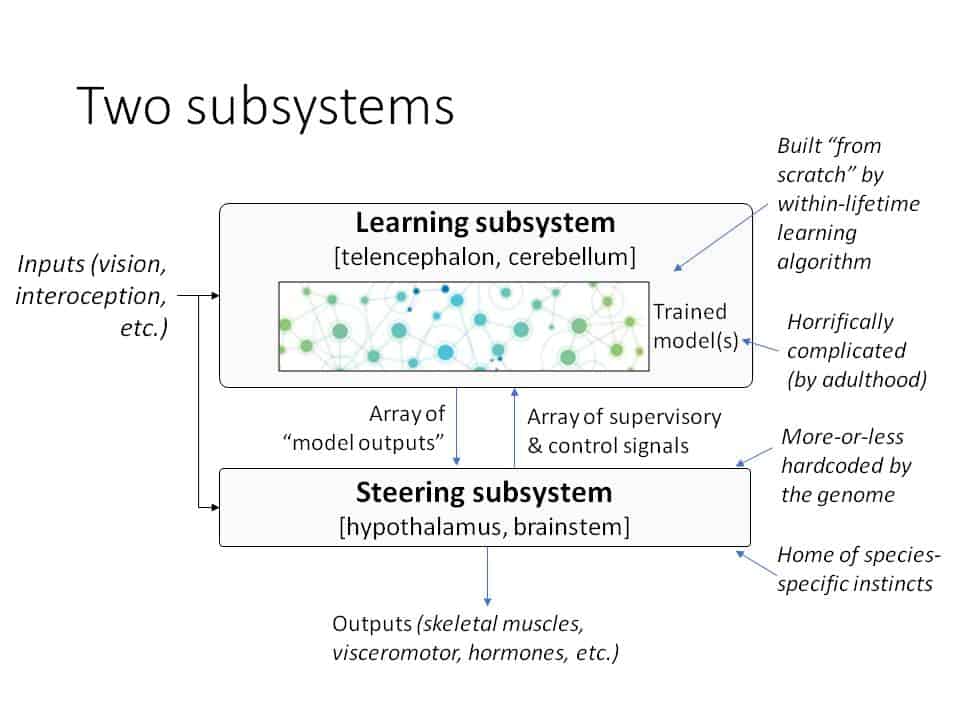

Sooner or later, researchers will probably figure out how to run brain-like algorithms on computer chips. When they do, computers will be able to understand the world, build and share knowledge, collaborate, figure things out, get things done, invent new tools and technologies to solve their problems, etc. That will be less like today’s AI and more like inviting a new intelligent species onto our planet. So how do we make sure that it’s a new intelligent species that we actually want to share the planet with? How do we make sure that they want to share the planet with us? I claim that we know enough about brain algorithms to clarify the nature of this problem and start making progress. I will argue for a picture in which ≈96% of the human brain is dedicated to running scaled-up learning algorithms; in which “brain-like AGI” is plausible in decades rather than centuries (and in particular, long before Whole Brain Emulation); and in which it is not only possible but indeed likely that future researchers will have figured out how to make powerful brain-like AGI while remaining perplexed about how to give those AGIs prosocial motivations. I will end with some open technical problems on which progress seems likely to help.

Challenge:

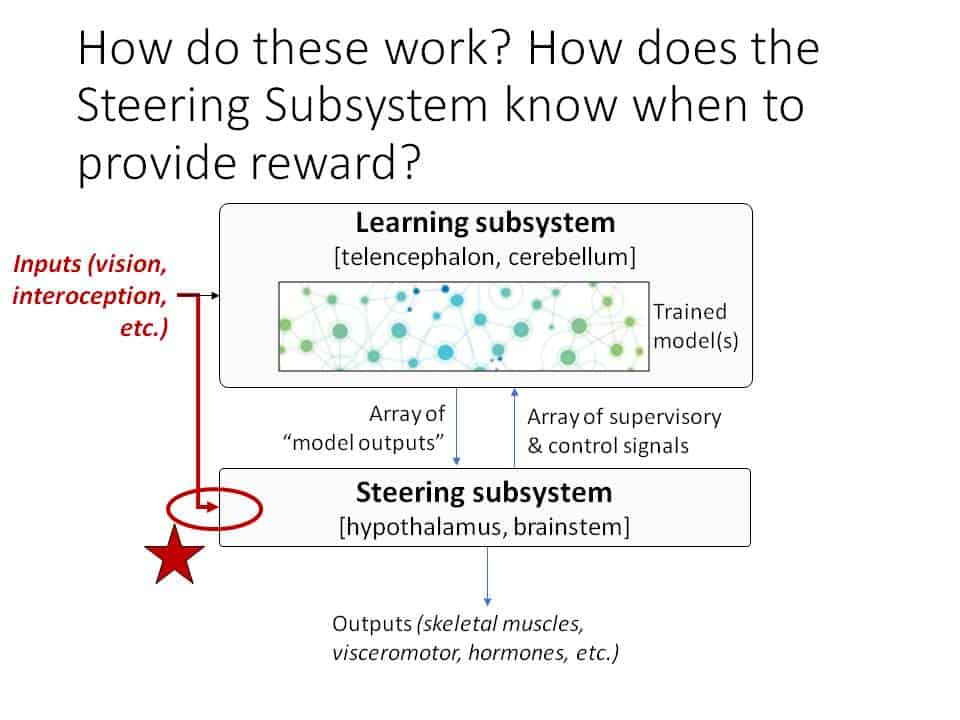

The brain famously does reinforcement learning, with the innate “reward function” involving things like “pain is bad” and “sweet tastes are good” and so on. There are countless papers investigating how reward signals cause updates to a learning algorithm via the dopamine system, but there is much less work on the question of exactly what the reward function is in the first place—a question centrally involving the hypothalamus and brainstem. For example, what kind of reward function would lead (in conjunction with life experience) to a desire for revenge? Or to a sense of fairness? Or wanting to hang out with the cool kids? I don’t currently have good answers to these questions, but I’m optimistic that answers do exist. However, I claim that those answers are (and must be) far more convoluted than anything I’ve seen in the literature, thanks to a kind of “symbol grounding problem” that needs to be addressed. Related to this question, I would very much like to see more work towards reverse-engineering the parts of the hypothalamus and brainstem involved in social instincts.
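To make the distinction between "how reward drives learning" and "what the reward function is" concrete, here is a minimal sketch using textbook temporal-difference (TD) learning. It is not a model of the brain and not the speaker's method; the names `INNATE_REWARD`, `innate_reward`, and `td_update`, the state labels, and the parameter values are all hypothetical, chosen only for illustration. The hard-coded table stands in for the innate reward function, and the update rule stands in for the learning step that reward signals drive.

```python
# Hypothetical innate reward function: fixed in advance, never learned.
# It keys on raw sensations only ("pain is bad", "sweet tastes are good").
INNATE_REWARD = {"pain": -1.0, "sweet_taste": 1.0, "neutral": 0.0}


def innate_reward(sensation: str) -> float:
    """Look up the hard-coded reward for a raw sensation (0.0 if unknown)."""
    return INNATE_REWARD.get(sensation, 0.0)


def td_update(values, state, next_state, sensation, alpha=0.1, gamma=0.9):
    """Apply one TD(0) update to values[state]; return the prediction error.

    The reward prediction error is the quantity that, in the standard
    account, dopamine signals are thought to resemble.
    """
    v_now = values.get(state, 0.0)
    v_next = values.get(next_state, 0.0)
    rpe = innate_reward(sensation) + gamma * v_next - v_now
    values[state] = v_now + alpha * rpe
    return rpe


# "Life experience": the same three episodes, repeated.
values = {}
for _ in range(50):
    td_update(values, "see_candy", "eat_candy", "neutral")
    td_update(values, "eat_candy", "done", "sweet_taste")
    td_update(values, "touch_stove", "done", "pain")

for state, value in values.items():
    print(f"{state}: {value:+.2f}")
```

In this toy, the learned value table ends up favoring candy and disfavoring the stove, yet everything the agent comes to want traces back to the three-entry reward table. One way to read the open question above is that it is far from obvious what such a table would need to contain, given that it can only refer to simple innate signals, for learned desires like revenge or fairness to emerge from it.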