Presenters

Zhu Xiaohu, Center for Safe AGI

Xiaohu (Neil) Zhu is the Founder and Chief Scientist of University AI, an organization providing AI education and training for individuals and big companies in China. He got a master degree on AI in Nanjing University with a background on algorithmic game theory, mathematical logic, deep learning, and reinforcement learning. He started the investigation on AGI/AI safety in 2016 and now focuses on…

Summary

Zhu speaks about the nature of ontological crisis – a state of reality shift for either human or machine. He then transitions into the ontological anti-crisis, and how to use such a phenomenon to increase safety for artificial general intelligence.

Presentation: Ontological anti-crisis and AI safety

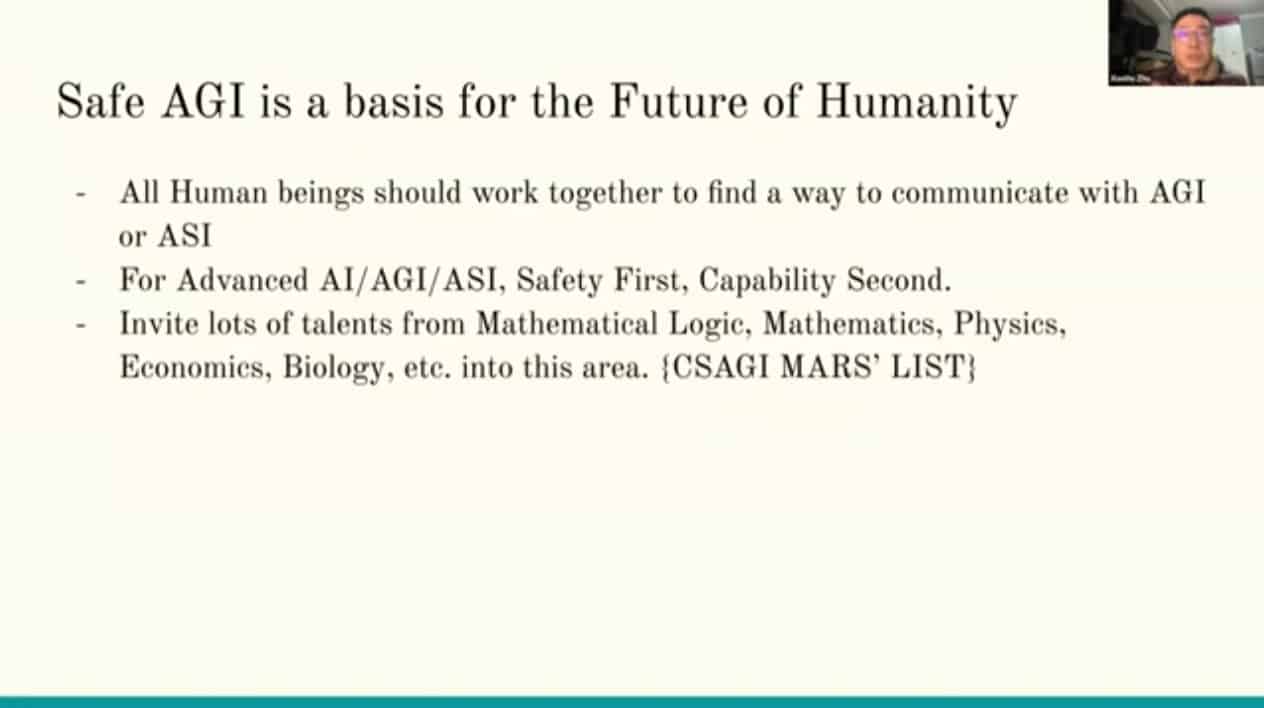

The center for safe artificial general intelligence uses partnerships in China to investigate methodologies for developing AI safely.

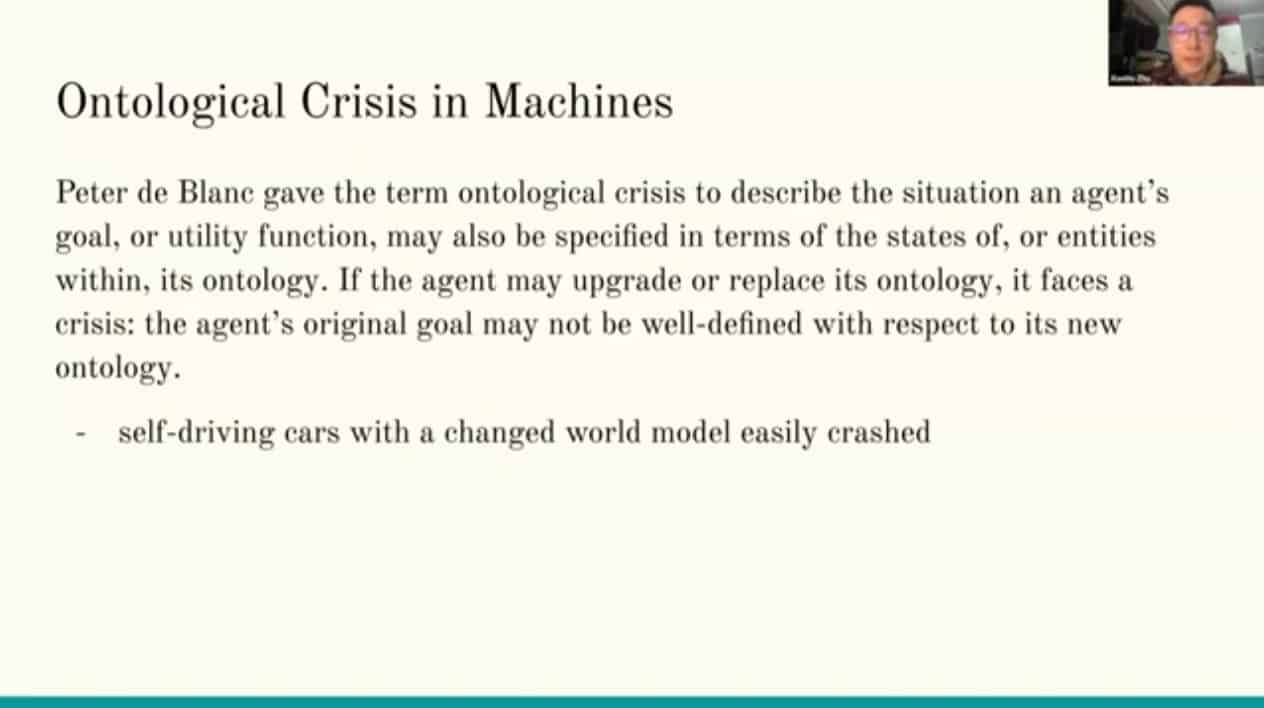

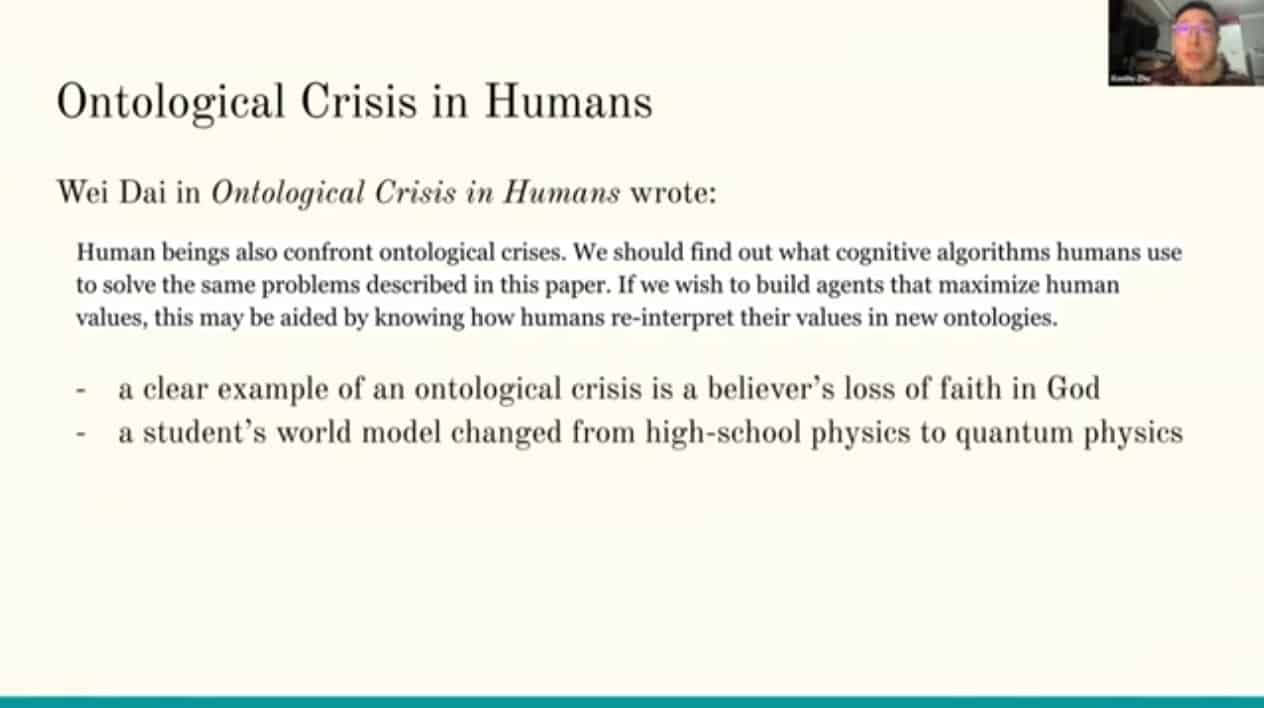

The (extreme) change of model of the reality for an entity

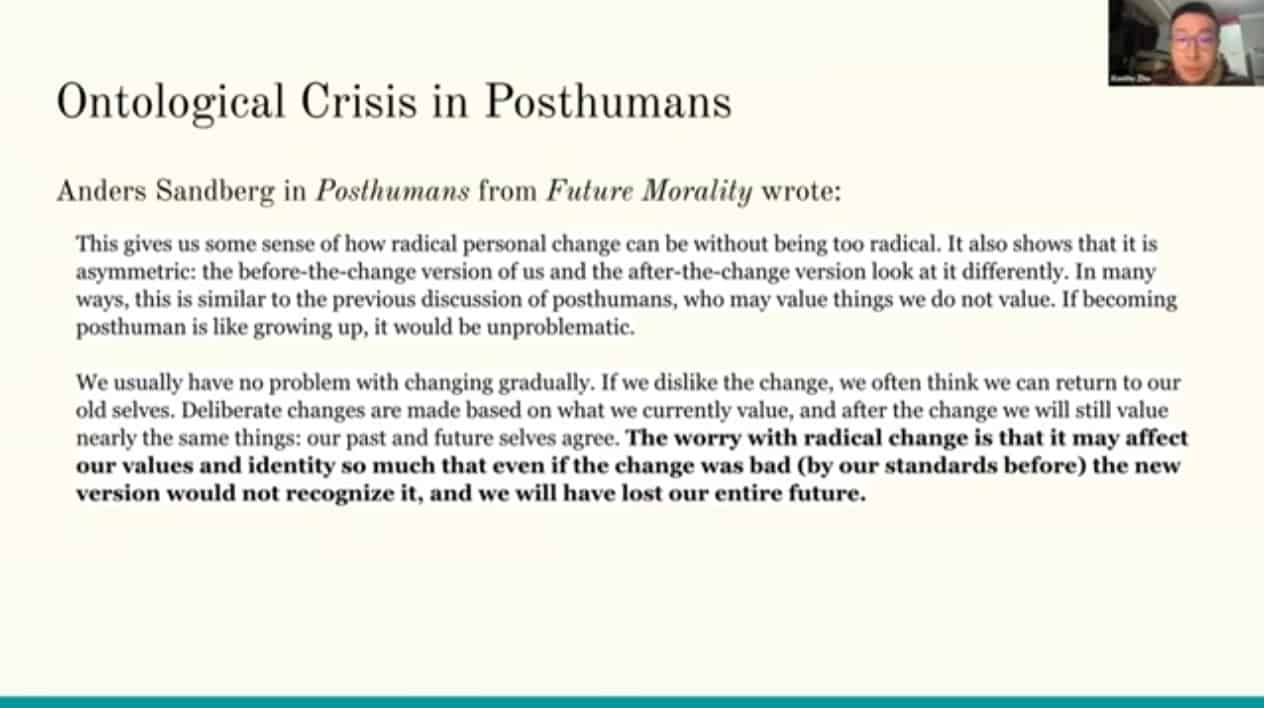

Humans, machines, and posthumans can undergo ontological crisis.

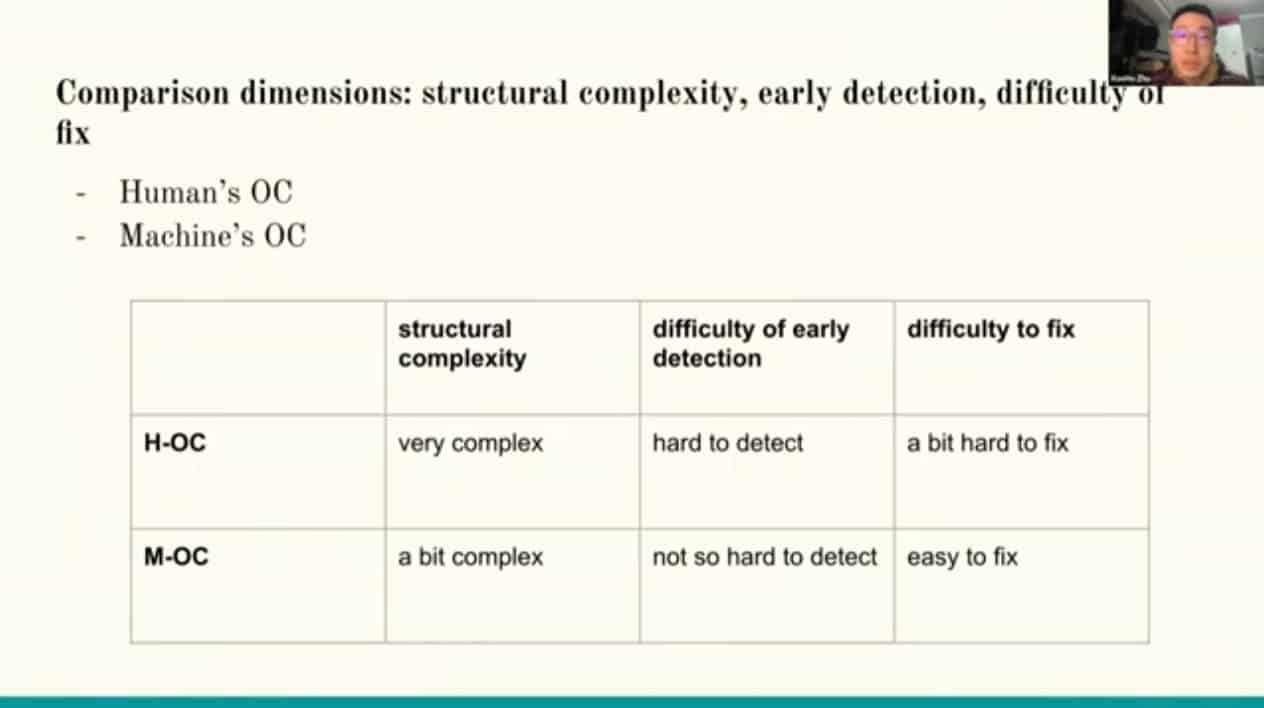

Decision diagram – machine ontological crisis is likely easier to fix.

The ontological hierarchy is a product of how ontological progress is built into the human or machine condition.

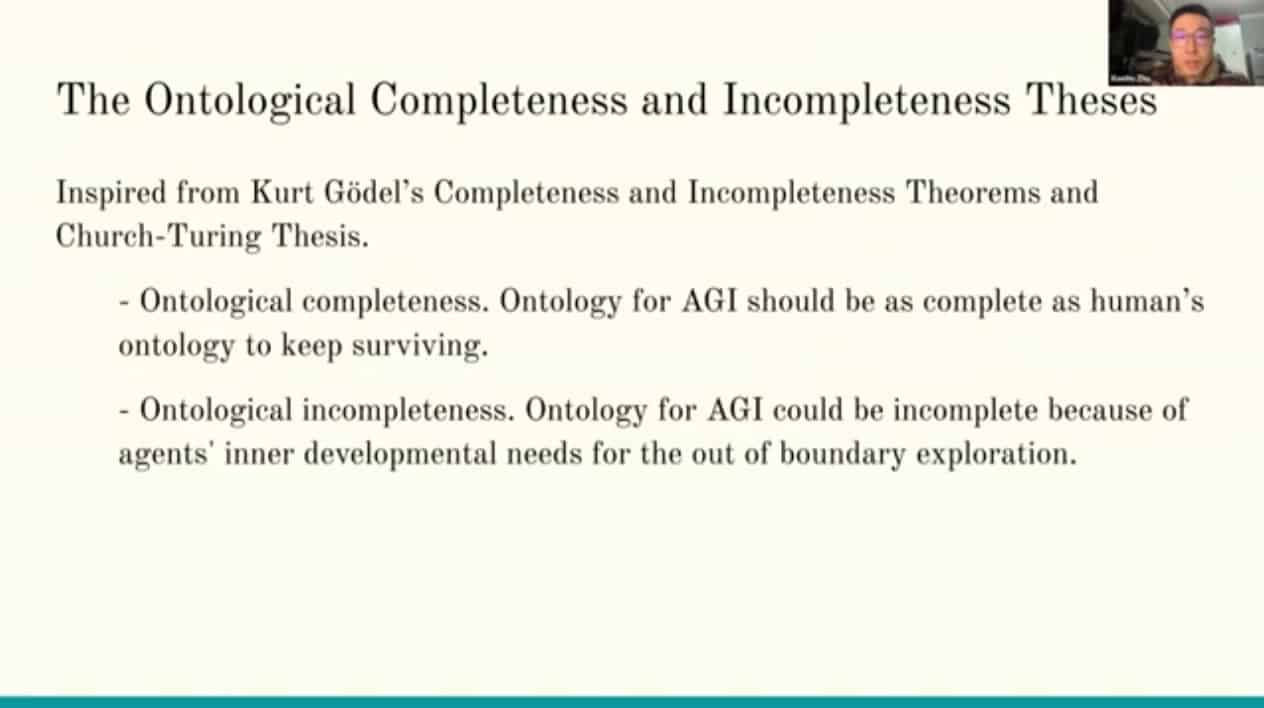

Ontological completeness and incompleteness Theses for safe AGI

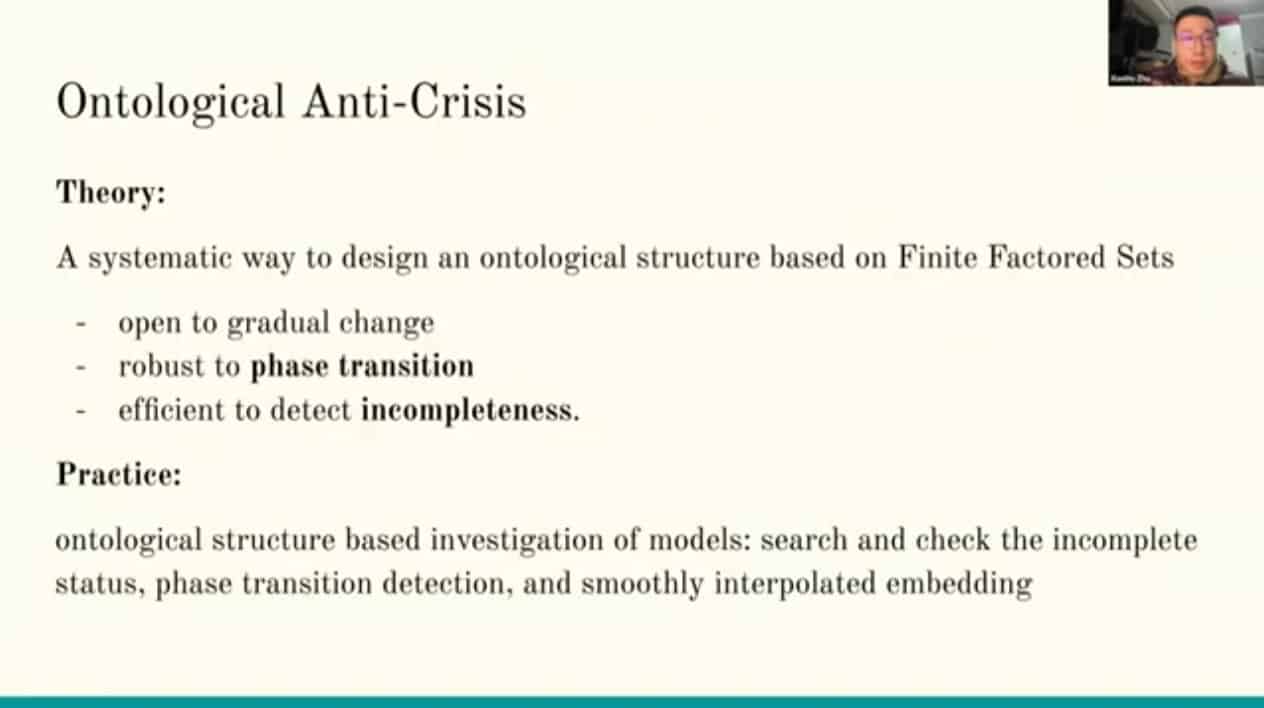

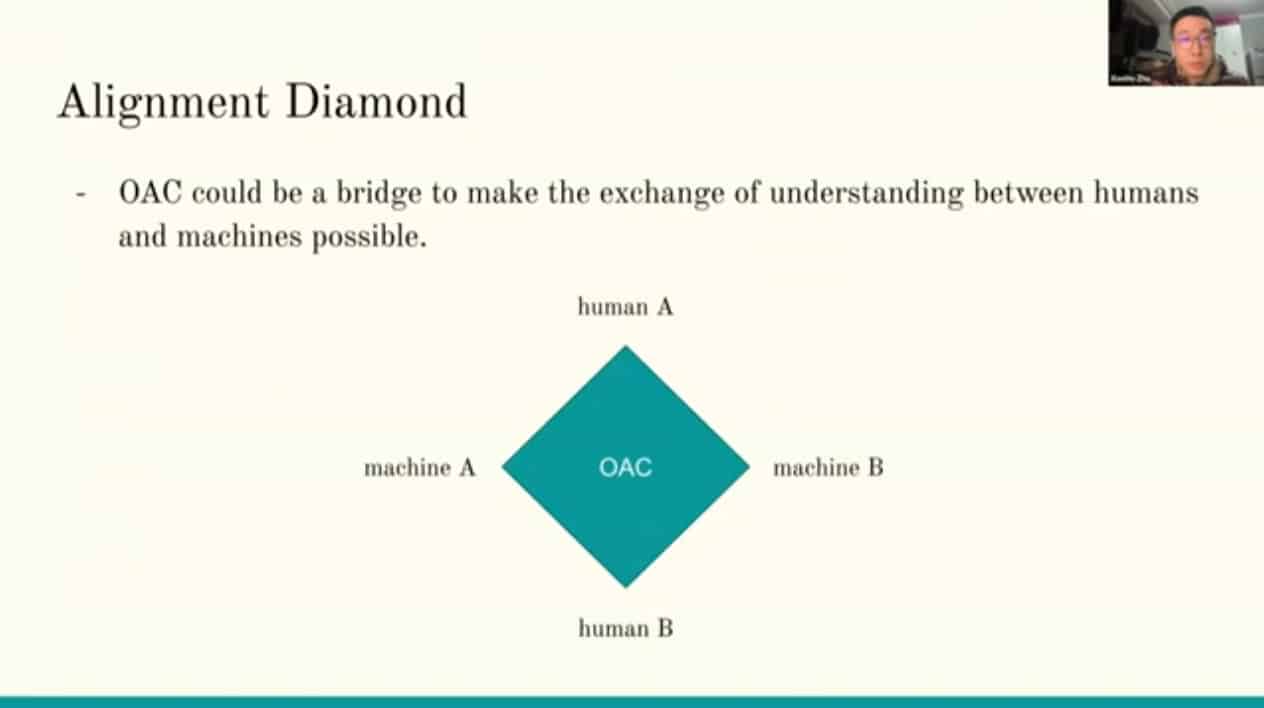

Ontological anti-crisis is a systematic way to design an ontological structure which takes change into account. It can help humans and machines understand each other.

OAC may be able to help with AI safety

Iterations of the OAC theory may be used to improve completeness and create safer interactions with AI